
3/1/2024 0 Comments Hosted postgresqlCreate a Tinybird Data Source to store Postgres CDC events.Set up the Confluent Cloud Postgres CDC Connector.Set up a Confluent Cloud stream cluster.Confirm PostgreSQL server is configured for generating CDC events.There are five fundamental steps for setting up the CDC event stream: Setting up the CDC data pipeline from Postgres to Tinybird This means you don’t have to worry about esoteric PostgreSQL details like replication slots, logical decoding, etc. The Connector also handles the initial snapshot of the table along with streaming the change events, simplifying the overall process. The Connector will then write those events to a Kafka stream and auto-generate a Kafka Topic name based on the source database schema and table name. These changes can then be propagated to other systems or databases, ensuring they have near real-time updates of the data.įor this guide, we will be using the Confluent Postgres CDC Source Connector to read CDC events from the WAL in real time.

CDC tools read from this log, capturing changes as they occur, getting real-time change streams without placing strain on the Postgres server.ĬDC processes monitor this WAL, capturing the changes as they occur. Postgres CDC is driven by its Write-Ahead Logging. Anytime data is inserted, updated, or deleted, it is logged in the WAL. The WAL maintains a record of all database updates, including when changes are made to database tables. PostgreSQL CDC is driven by its Write-Ahead Logging (WAL), which also supports its replication process. To learn more about building API endpoints with custom query parameters using Tinybird, check out this blog post.

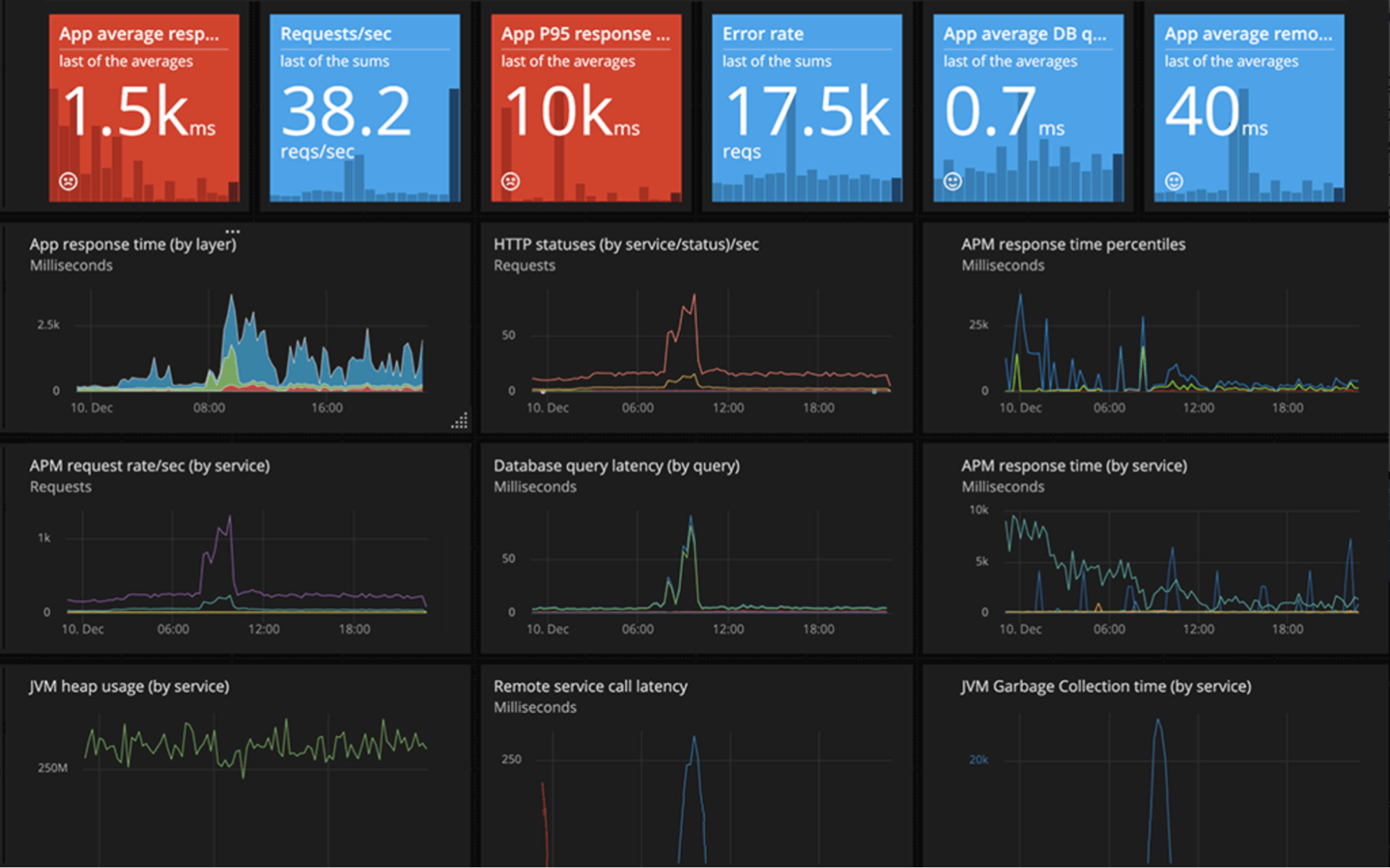

In either case, Tinybird API endpoints serve downstream use cases powered by this real-time CDC pipeline. Why Tinybird? Tinybird is an ideal sink for CDC event streams, as it can be used to both run analytics on the event streams themselves and create up-to-the-second views or snapshots of Postgres tables. In this PostgreSQL CDC pipeline, the Confluent managed Postgres CDC Connector is used to stream change data into a destination, in this case Tinybird. On the other end of that stream is a Tinybird Data Source, which collects those events and stores them in a columnar database optimized for real-time analytics. In this case, I am hosting my Postgres database instance on Amazon Web Services (AWS) Relational Database Service (RDS), reading CDC events with the Debezium-based Confluent Postgres CDC Connector, and publishing the CDC events on a Confluent-hosted Kafka stream. To follow along, you will need access to a Postgres database that is generating changes that can become CDC events. There are many options for hosting PostgreSQL databases and streaming CDC events to Tinybird.

In addition, I’ll show you how to use Tinybird to provide an endpoint that returns current snapshots of the Postgres table as an eventually-consistent API. Tinybird also serves as a real-time data platform to run real-time analytics on your data change events. In this guide, I’ll show you how to build a real-time CDC pipeline using Postgres as a source database, Kafka Connect (via Confluent Cloud) as both the generator and the broadcaster of real-time CDC events, and Tinybird as the consumer of the CDC event streams. In this post, you'll learn how to build a real-time Postgres CDC pipeline using Confluent Cloud and Tinybird. In the context of PostgreSQL, CDC provides a method to share the change events from Postgres tables without affecting the performance of the Postgres instance itself. Change Data Capture (CDC) is a powerful technique used in event-driven architectures to capture change streams from a source data system, often a database, and propagate them to other downstream consumers and data stores such as data lakes, data warehouses, or real-time data platforms.
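
Confirming that the server is configured for CDC mostly comes down to whether logical decoding is enabled, since the Debezium-based connector reads the WAL through a logical replication slot. The sketch below is a minimal, hypothetical helper (`check_cdc_settings` is not part of any tool) that checks values you would collect beforehand by running `SHOW wal_level;`, `SHOW max_replication_slots;` and `SHOW max_wal_senders;`. It assumes the requirements are `wal_level = logical` plus at least one replication slot and WAL sender. On Amazon RDS, `wal_level` becomes `logical` when `rds.logical_replication` is set to `1` in the DB parameter group, which typically requires a reboot.

```python
# Minimal sketch: sanity-check Postgres settings needed for logical decoding,
# which the Debezium-based CDC connector relies on.
# Assumption: values were collected beforehand with
#   SHOW wal_level; SHOW max_replication_slots; SHOW max_wal_senders;
# check_cdc_settings is a hypothetical helper, not part of any library.
from typing import Dict, List


def check_cdc_settings(settings: Dict[str, str]) -> List[str]:
    """Return a list of problems; an empty list means the basics look OK."""
    problems: List[str] = []

    # Logical decoding only works when the WAL records logical-level detail.
    wal_level = settings.get("wal_level")
    if wal_level != "logical":
        problems.append(
            f"wal_level is {wal_level!r}, expected 'logical' "
            "(on Amazon RDS: set rds.logical_replication = 1 in the parameter group)"
        )

    # The connector uses one replication slot and one WAL sender connection,
    # so both limits must be at least 1 (this does not check how many are in use).
    for name in ("max_replication_slots", "max_wal_senders"):
        value = int(settings.get(name, "0"))
        if value < 1:
            problems.append(f"{name} is {value}, expected at least 1")

    return problems


if __name__ == "__main__":
    # Illustrative values only, not the defaults of any particular server.
    example = {"wal_level": "replica", "max_replication_slots": "10", "max_wal_senders": "10"}
    for problem in check_cdc_settings(example):
        print(problem)
```

With the illustrative values above, only the `wal_level` check fails, so the script prints a single line. Passing the raw `SHOW` output as strings keeps the helper independent of any particular Postgres driver.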

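Serving a current snapshot of a Postgres table from CDC events amounts to replaying the events (the connector's initial snapshot followed by the ongoing changes) and keeping only the latest version of each row. The in-memory Python sketch below illustrates that idea; it is not how Tinybird implements it. It assumes each event has already been unwrapped to the Debezium envelope fields `op` (`r` snapshot read, `c` insert, `u` update, `d` delete), `before` and `after`, that the primary key column is named `id` (hypothetical), and that events for any given row arrive in commit order.

```python
# Minimal sketch (not Tinybird's implementation): fold Debezium-style change
# events into the latest state of each row, keyed by primary key.
# Assumptions: each event uses the Debezium envelope fields "op", "before" and
# "after"; the primary key column is called "id" (hypothetical); and events for
# any given row arrive in commit order.
from typing import Any, Dict, Iterable, Optional

Event = Dict[str, Any]
Row = Dict[str, Any]


def apply_changes(events: Iterable[Optional[Event]], key: str = "id") -> Dict[Any, Row]:
    snapshot: Dict[Any, Row] = {}
    for event in events:
        if event is None:
            # Kafka tombstones (null values) follow deletes; nothing to apply.
            continue
        op = event["op"]
        if op in ("r", "c", "u"):
            # 'r' = initial snapshot read, 'c' = insert, 'u' = update:
            # "after" holds the full new row, so it replaces any older version.
            row = event["after"]
            snapshot[row[key]] = row
        elif op == "d":
            # For deletes "after" is null; with Postgres's default replica
            # identity, "before" carries at least the primary key.
            snapshot.pop(event["before"][key], None)
    return snapshot


if __name__ == "__main__":
    events = [
        {"op": "r", "before": None, "after": {"id": 1, "status": "new"}},
        {"op": "c", "before": None, "after": {"id": 2, "status": "new"}},
        {"op": "u", "before": None, "after": {"id": 1, "status": "shipped"}},
        {"op": "d", "before": {"id": 2}, "after": None},
        None,  # tombstone for id 2
    ]
    print(apply_changes(events))  # {1: {'id': 1, 'status': 'shipped'}}
```

Because every insert or update replaces the whole row and every delete removes it, replaying the same event stream always yields the same table state. A consumer that lags behind converges on the source table once it catches up, which is the sense in which such a snapshot is eventually consistent.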